The future of entry-level jobs as AI capabilities progress has been intensely discussed over the last few months. Two highlights: Anthropic CEO Dario Amodei stated that “AI could wipe out half of all entry-level white-collar jobs… in the next one to five years”, and a landmark Stanford study reported results “consistent with the hypothesis that generative AI has begun to affect entry-level employment”.

But a negative impact of AI on entry-level jobs is not inevitable. We need to rethink and redesign the nature of entry-level jobs for what is becoming a very different work environment.

The CRAFT framework proposes an approach to building a positive future of work and organizations. You can download the PDF here or read below.

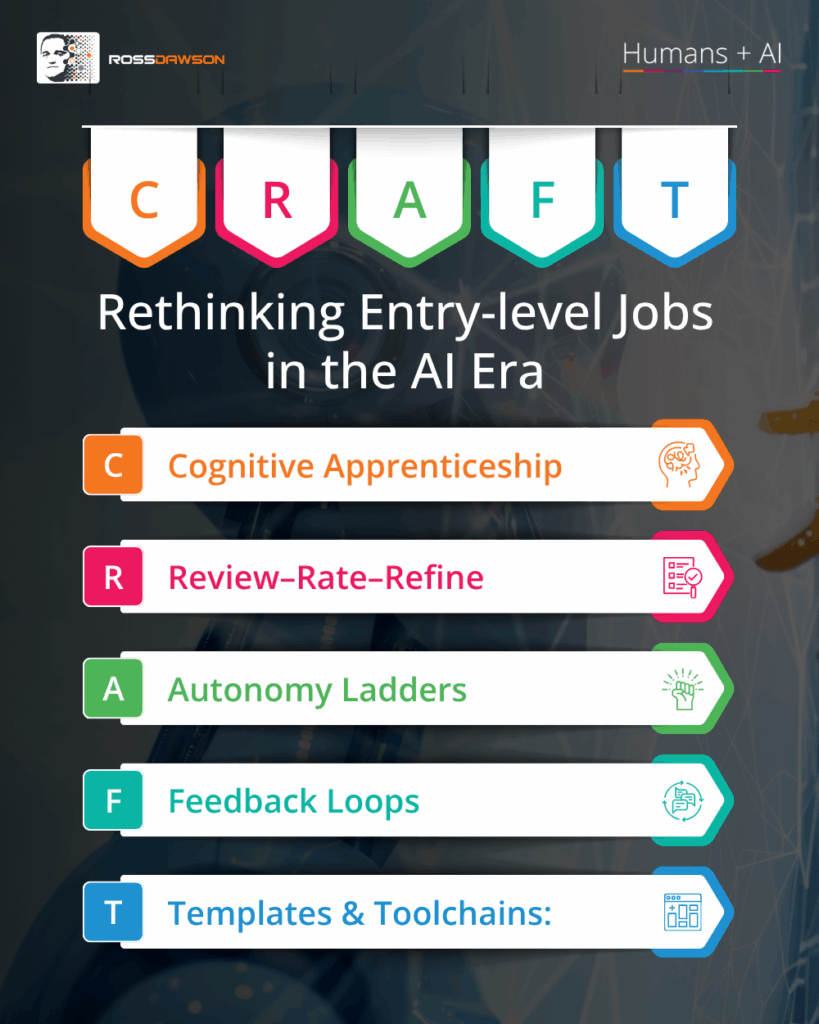

CRAFT: Rethinking entry-level jobs in the AI era

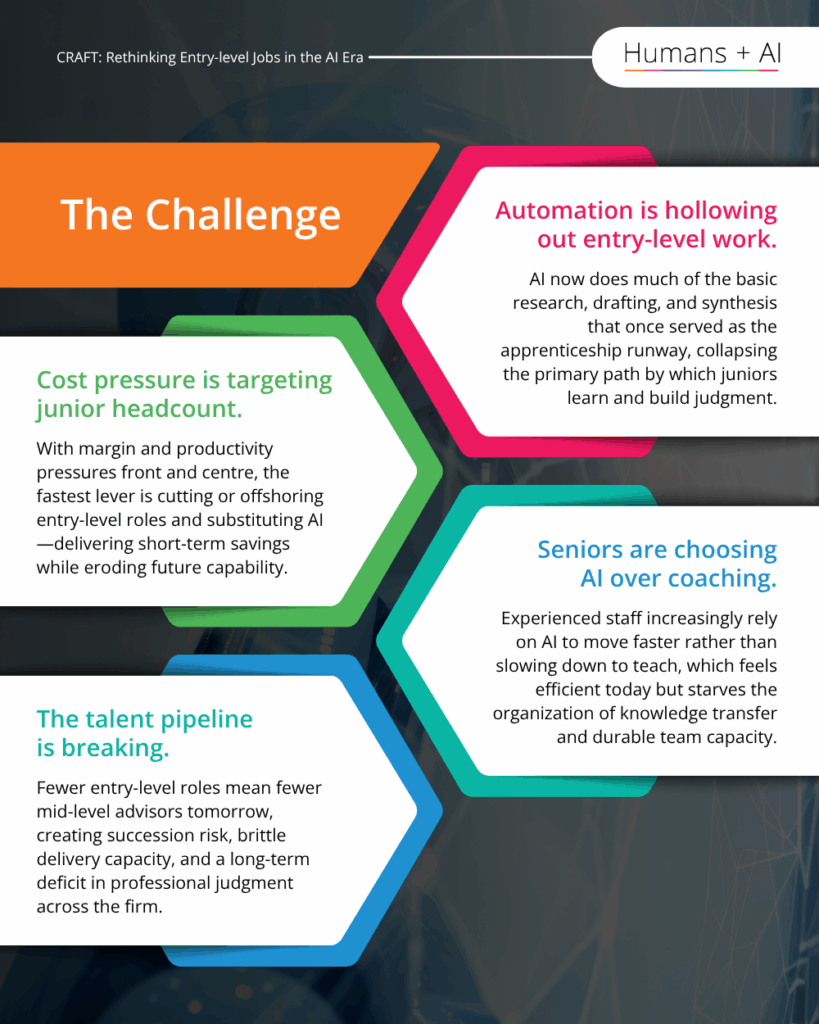

The Challenge

- Automation is hollowing out entry-level work. AI now does much of the basic research, drafting, and synthesis that once served as the apprenticeship runway, collapsing the primary path by which juniors learn and build judgment.

- Cost pressure is targeting junior headcount. With margin and productivity pressures front and centre, the fastest lever is cutting or offshoring entry-level roles and substituting AI—delivering short-term savings while eroding future capability.

- Seniors are choosing AI over coaching. Experienced staff increasingly rely on AI to move faster rather than slowing down to teach, which feels efficient today but starves the organisation of knowledge transfer and durable team capacity.

- The talent pipeline is breaking. Fewer entry-level roles mean fewer mid-level advisors tomorrow, creating succession risk, brittle delivery capacity, and a long-term deficit in professional judgment across the firm.

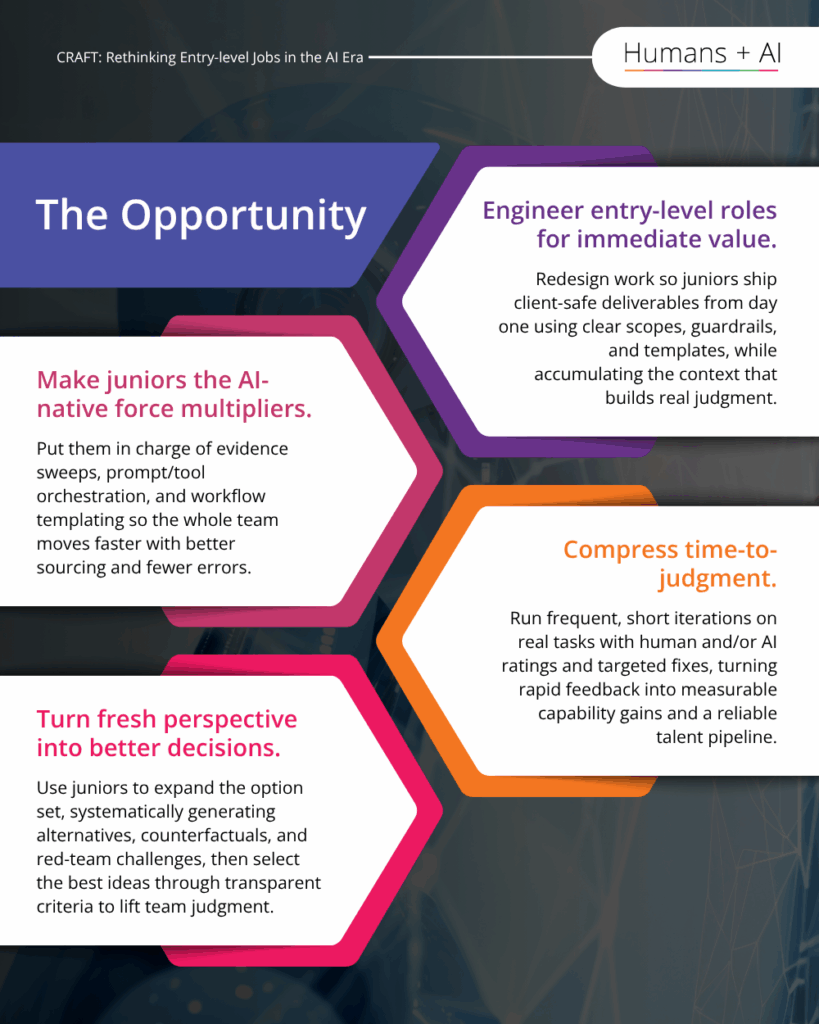

The Opportunity

- Engineer entry-level roles for immediate value. Redesign work so juniors ship client-safe deliverables from day one using clear scopes, guardrails, and templates, while accumulating the context that builds real judgment.

- Make juniors the AI-native force multipliers. Put them in charge of evidence sweeps, prompt/tool orchestration, and workflow templating so the whole team moves faster with better sourcing and fewer errors.

- Compress time-to-judgment. Run frequent, short reps on real tasks with human and/or AI ratings and targeted fixes, turning rapid feedback into measurable capability gains and a reliable talent pipeline.

- Turn fresh perspective into better decisions. Use juniors to expand the option set—systematically generating alternatives, counterfactuals, and red-team challenges—then select the best ideas through transparent criteria to lift team judgment.

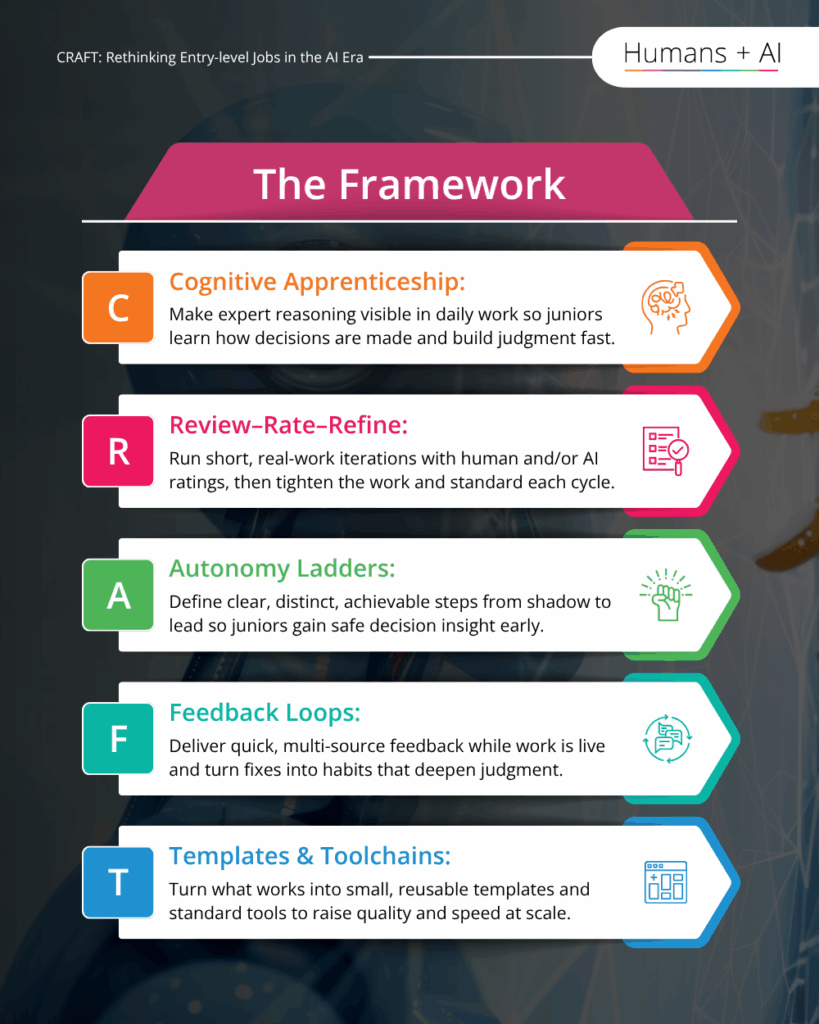

The Framework

- C: Cognitive Apprenticeship. Make expert reasoning visible in daily work so juniors learn how decisions are made and build judgment fast.

- R: Review–Rate–Refine. Run short, real-work reps with human and/or AI ratings, then tighten the work and the standard each cycle.

- A: Autonomy Ladders. Define clear, distinct, achievable steps from shadow to lead so juniors gain safe decision points early.

- F: Feedback Loops. Deliver quick, multi-source feedback while work is live and turn fixes into habits that deepen judgment.

- T: Templates & Toolchains. Turn what works into small, reusable templates and standard tools to raise quality and speed at scale.

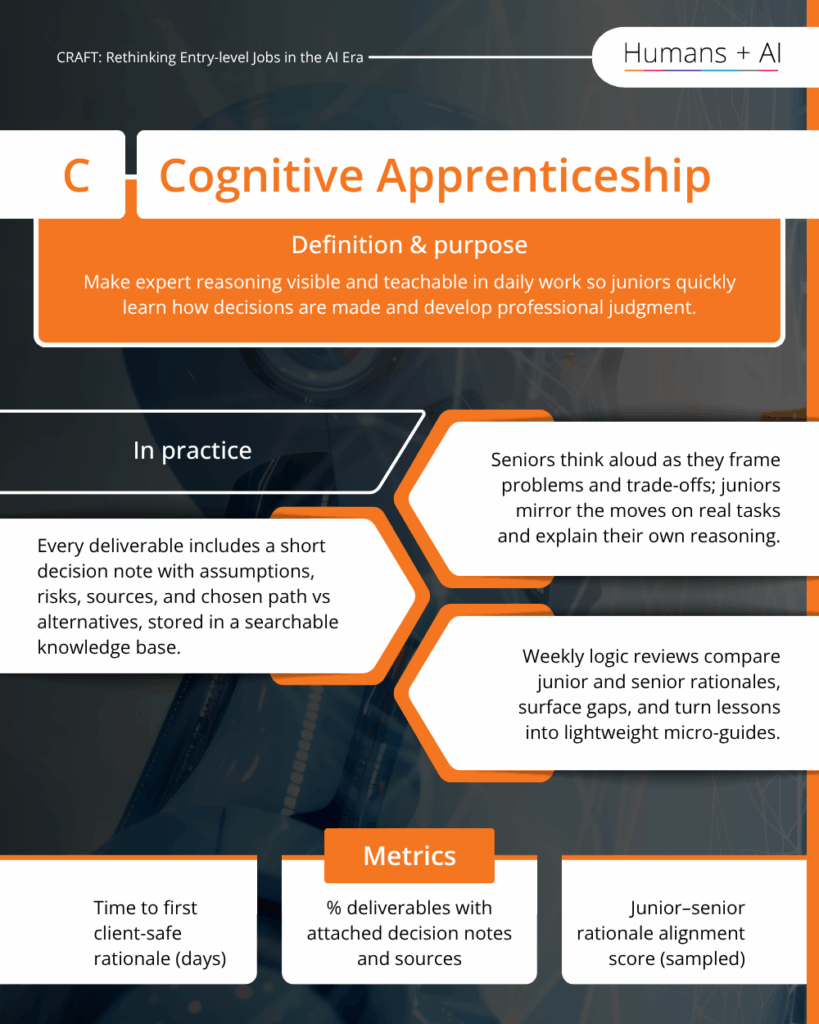

C — Cognitive Apprenticeship

Definition & purpose

Make expert reasoning visible and teachable in daily work so juniors quickly learn how decisions are made and develop professional judgment.

In practice

- Seniors think aloud as they frame problems and trade-offs; juniors mirror the moves on real tasks and explain their own reasoning.

- Every deliverable includes a short decision note with assumptions, risks, sources, and chosen path vs alternatives, stored in a searchable knowledge base.

- Weekly logic reviews compare junior and senior rationales, surface gaps, and turn lessons into lightweight micro-guides.

Metrics

- Time to first client-safe rationale (days)

- % deliverables with attached decision notes and sources

- Junior–senior rationale alignment score (sampled)
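For teams that want to track the decision-note metric in a script or notebook, here is a minimal sketch. It assumes a note only counts when it cites at least one source; the `DecisionNote` and `Deliverable` records, their field names, and the sample data are hypothetical illustrations, not part of the framework.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DecisionNote:
    """Hypothetical record mirroring the decision-note fields described above."""
    assumptions: list
    risks: list
    sources: list
    chosen_path: str
    alternatives: list = field(default_factory=list)


@dataclass
class Deliverable:
    title: str
    note: Optional[DecisionNote] = None  # None means no decision note attached


def note_coverage(deliverables):
    """Percent of deliverables with a decision note citing at least one source."""
    if not deliverables:
        return 0.0
    covered = sum(1 for d in deliverables if d.note is not None and d.note.sources)
    return 100.0 * covered / len(deliverables)


# Illustrative data only.
deliverables = [
    Deliverable("Market scan", DecisionNote(["Stable demand"], ["Stale data"], ["Q3 report"], "Option A", ["Option B"])),
    Deliverable("Pricing memo", DecisionNote(["Flat costs"], [], [], "Hold price")),  # note without sources
    Deliverable("Client brief"),  # no note at all
    Deliverable("Risk summary", DecisionNote([], ["Vendor delay"], ["Vendor SLA"], "Dual-source")),
]
print(f"{note_coverage(deliverables):.1f}% of deliverables have a sourced decision note")
```

Counting a note without sources as uncovered is a design choice; a team could equally report notes and sources as two separate percentages.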

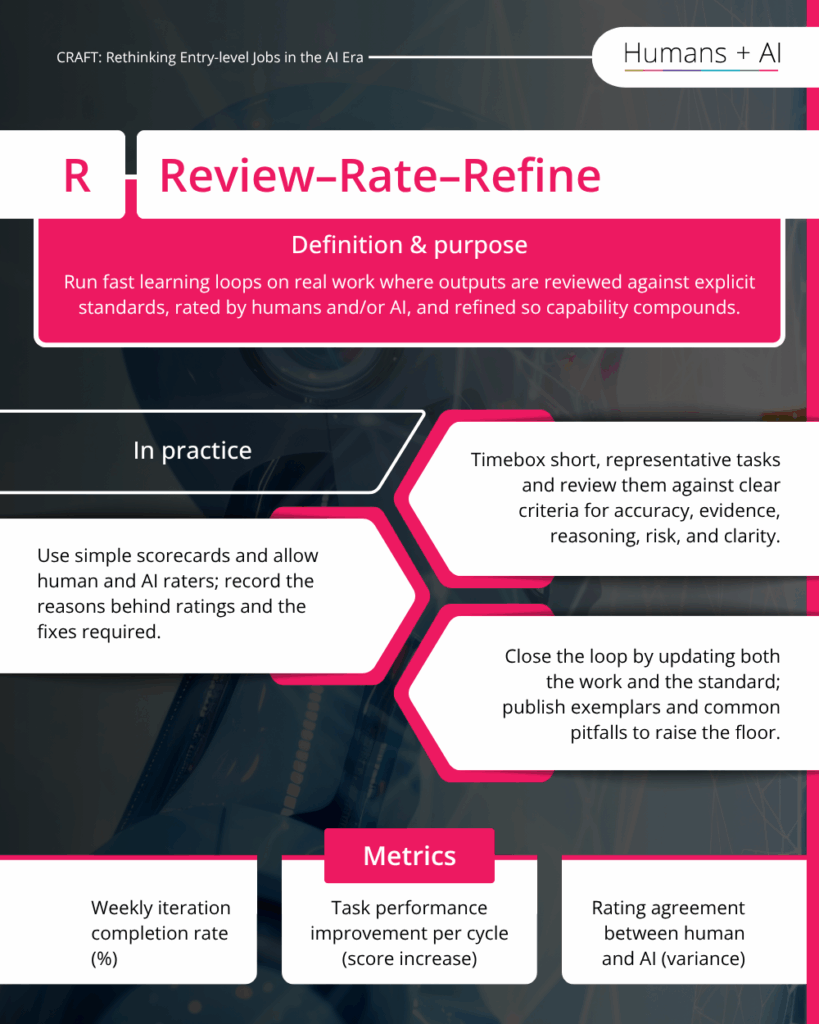

R — Review–Rate–Refine

Definition & purpose

Run fast learning loops on real work where outputs are reviewed against explicit standards, rated by humans and/or AI, and refined so capability compounds.

In practice

- Timebox short, representative tasks and review them against clear criteria for accuracy, evidence, reasoning, risk, and clarity.

- Use simple scorecards and allow human and AI raters; record the reasons behind ratings and the fixes required.

- Close the loop by updating both the work and the standard; publish exemplars and common pitfalls to raise the floor.

Metrics

- Weekly rep completion rate (%)

- Improvement delta per task over two cycles (score increase)

- Rating agreement between human and AI (variance)
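The last two metrics can be computed from simple scorecards. The sketch below is one possible reading, not a prescribed method: it assumes each of the five review criteria is scored 1 to 5, takes the improvement delta as the total score in the latest cycle minus the total in the first, and reads "variance" as the mean squared gap between human and AI totals. All function names and sample scores are hypothetical.

```python
from statistics import mean

# The five review criteria named in the practice list above.
CRITERIA = ("accuracy", "evidence", "reasoning", "risk", "clarity")


def total_score(scorecard):
    """Sum of the per-criterion scores (assumed 1-5 each, so 5-25 overall)."""
    return sum(scorecard[c] for c in CRITERIA)


def improvement_delta(cycles):
    """Score increase from the first recorded cycle to the latest one."""
    return total_score(cycles[-1]) - total_score(cycles[0])


def mean_squared_gap(human_totals, ai_totals):
    """Average squared difference between human and AI totals; 0 means full agreement."""
    if len(human_totals) != len(ai_totals):
        raise ValueError("each task needs both a human and an AI rating")
    return mean((h - a) ** 2 for h, a in zip(human_totals, ai_totals))


# Illustrative data only: one task rated in two consecutive cycles.
cycle_1 = {"accuracy": 3, "evidence": 2, "reasoning": 3, "risk": 2, "clarity": 3}
cycle_2 = {"accuracy": 4, "evidence": 3, "reasoning": 4, "risk": 3, "clarity": 4}
print("Improvement delta:", improvement_delta([cycle_1, cycle_2]))

# Illustrative data only: totals for four tasks rated by both a human and an AI.
human = [13, 18, 20, 16]
ai = [15, 18, 18, 16]
print("Mean squared human-AI gap:", mean_squared_gap(human, ai))
```

A low gap suggests the AI rater is calibrated well enough to take on more of the rating load; a rising gap is a cue to revisit the scorecard wording or the AI rater's instructions.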

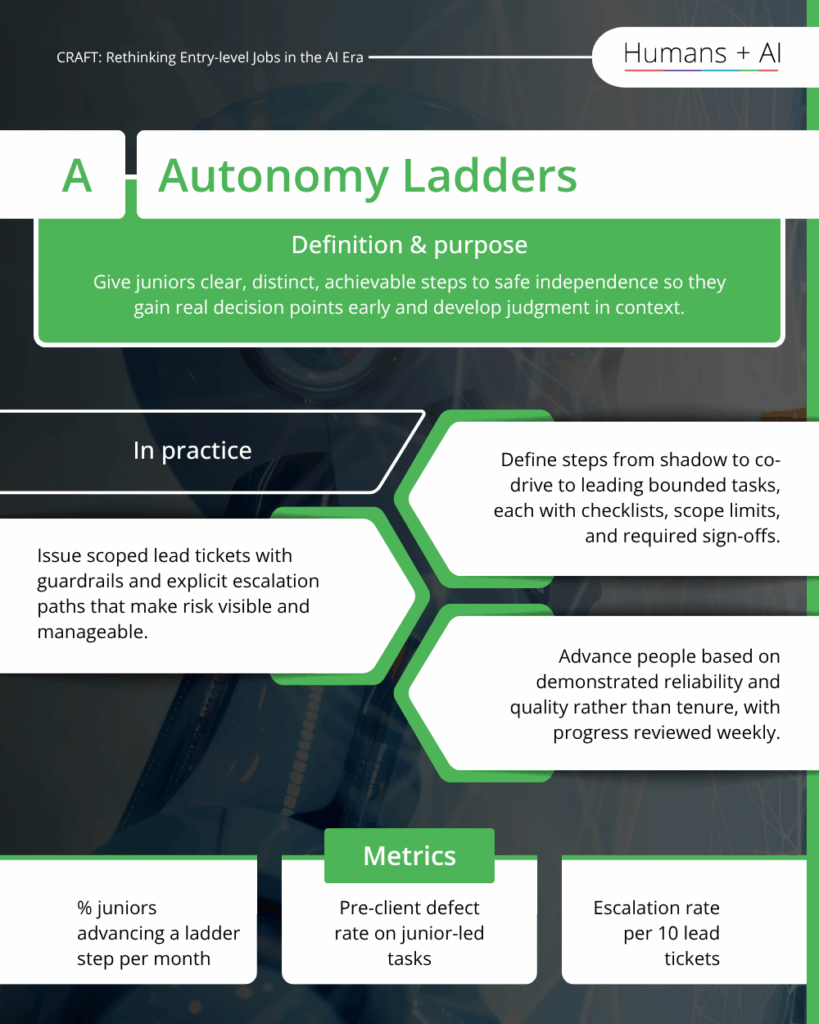

A — Autonomy Ladders

Definition & purpose

Give juniors clear, distinct, achievable steps to safe independence so they gain real decision points early and develop judgment in context.

In practice

- Define steps from shadow to co-drive to leading bounded tasks, each with checklists, scope limits, and required sign-offs.

- Issue scoped lead tickets with guardrails and explicit escalation paths that make risk visible and manageable.

- Advance people based on demonstrated reliability and quality rather than tenure, with progress reviewed weekly.

Metrics

- % juniors advancing a ladder step per month

- Pre-client defect rate on junior-led tasks

- Escalation rate per 10 lead tickets
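As a rough illustration, the three ladder metrics reduce to simple ratios. The sketch assumes a three-step ladder (shadow, co-drive, lead bounded tasks) and a monthly snapshot of each junior's step; the `Step` enum, the function names, and the sample figures are hypothetical.

```python
from enum import IntEnum


class Step(IntEnum):
    """Hypothetical three-step ladder based on the steps named above."""
    SHADOW = 1
    CO_DRIVE = 2
    LEAD_BOUNDED = 3


def pct_advancing(start_of_month, end_of_month):
    """Percent of juniors whose ladder step is higher at month end than at month start."""
    if not start_of_month:
        return 0.0
    advanced = sum(1 for name, step in start_of_month.items() if end_of_month[name] > step)
    return 100.0 * advanced / len(start_of_month)


def pre_client_defect_rate(defective_tasks, junior_led_tasks):
    """Percent of junior-led tasks with a defect caught before reaching the client."""
    return 100.0 * defective_tasks / junior_led_tasks if junior_led_tasks else 0.0


def escalations_per_10(escalations, lead_tickets):
    """Escalations normalised to a base of 10 lead tickets."""
    return 10.0 * escalations / lead_tickets if lead_tickets else 0.0


# Illustrative data only.
start = {"ana": Step.SHADOW, "ben": Step.CO_DRIVE, "cy": Step.SHADOW, "dee": Step.LEAD_BOUNDED}
end = {"ana": Step.CO_DRIVE, "ben": Step.CO_DRIVE, "cy": Step.CO_DRIVE, "dee": Step.LEAD_BOUNDED}

print("Advancing this month (%):", pct_advancing(start, end))
print("Pre-client defect rate (%):", pre_client_defect_rate(2, 16))
print("Escalations per 10 lead tickets:", escalations_per_10(3, 20))
```

Note that juniors already on the top step can never count as advancing, so a team with many of them may prefer to exclude that group from the denominator.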

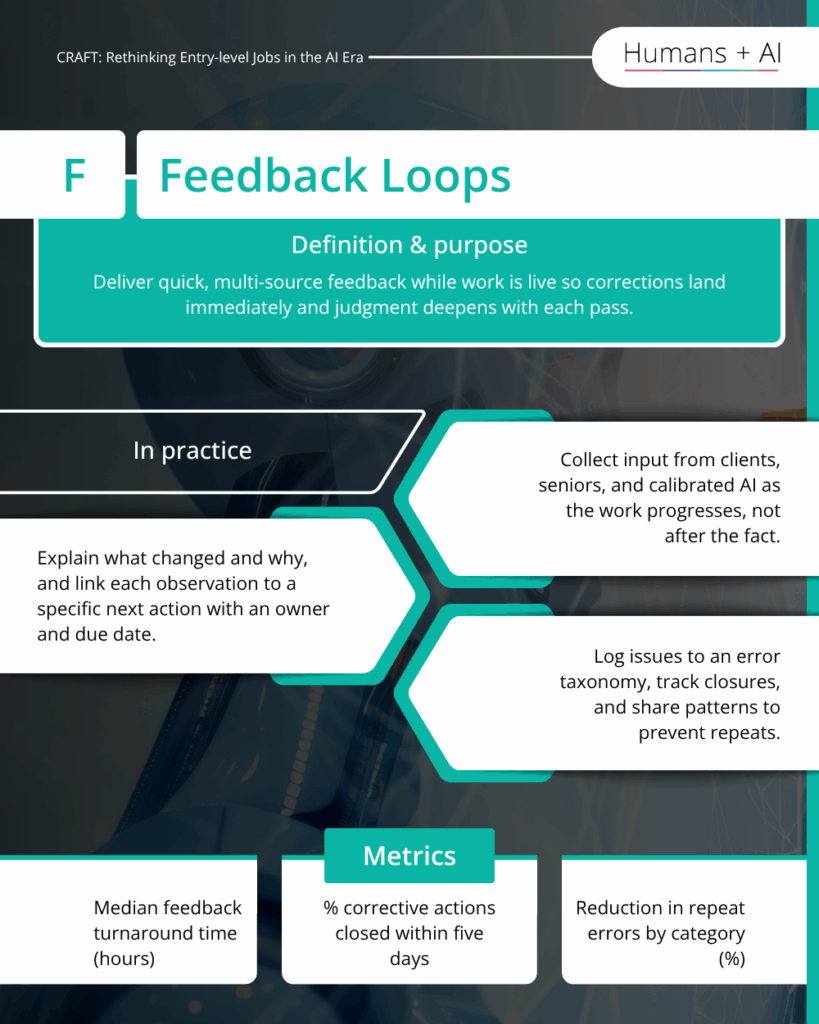

F — Feedback Loops

Definition & purpose

Deliver quick, multi-source feedback while work is live so corrections land immediately and judgment deepens with each pass.

In practice

- Collect input from clients, seniors, and calibrated AI as the work progresses, not after the fact.

- Explain what changed and why, and link each observation to a specific next action with an owner and due date.

- Log issues to an error taxonomy, track closures, and share patterns to prevent repeats.

Metrics

- Median feedback turnaround time (hours)

- % corrective actions closed within five days

- Reduction in repeat errors by category (%)
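For illustration, these metrics can be derived from a basic feedback log. The sketch assumes each feedback item records when it was requested and delivered, each corrective action records when it was opened and (optionally) closed, and repeat errors are counted per taxonomy category per period. The function names and sample data are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import median


def median_turnaround_hours(feedback):
    """Median hours between a feedback request and its delivery."""
    return median((done - asked).total_seconds() / 3600 for asked, done in feedback)


def pct_closed_within(actions, days=5):
    """Percent of corrective actions closed within `days`; still-open actions count as misses."""
    if not actions:
        return 0.0
    limit = timedelta(days=days)
    on_time = sum(1 for opened, closed in actions if closed is not None and closed - opened <= limit)
    return 100.0 * on_time / len(actions)


def repeat_error_reduction(previous, current):
    """Percent reduction in repeat errors per category, skipping categories with no prior errors."""
    return {
        category: 100.0 * (count - current.get(category, 0)) / count
        for category, count in previous.items()
        if count > 0
    }


# Illustrative data only.
t0 = datetime(2025, 1, 6, 9, 0)
feedback = [(t0, t0 + timedelta(hours=2)), (t0, t0 + timedelta(hours=6)), (t0, t0 + timedelta(hours=4))]
actions = [
    (t0, t0 + timedelta(days=3)),
    (t0, t0 + timedelta(days=7)),
    (t0, None),  # still open
    (t0, t0 + timedelta(days=5)),
]

print("Median turnaround (hours):", median_turnaround_hours(feedback))
print("Closed within five days (%):", pct_closed_within(actions))
print("Repeat-error reduction (%):", repeat_error_reduction({"sourcing": 8, "formatting": 4}, {"sourcing": 6, "formatting": 1}))
```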

T — Templates & Toolchains

Definition & purpose

Turn what works into small, reusable templates and standard toolchains so quality rises, speed improves, and learning compounds.

In practice

- Convert accepted deliverables into micro-templates with steps, examples, and checks that others can reuse.

- Maintain a governed template registry and a standard toolchain so reuse is easy and audit-ready across teams.

- Review templates weekly; retire weak ones, promote proven ones, and measure adoption on live work.

Metrics

- Template reuse rate on eligible tasks (%)

- Time saved per templated task (minutes)

- Number of merged template updates per month
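A minimal sketch of the first two template metrics follows. It assumes time saved is estimated by comparing the average duration of eligible tasks done with a template against eligible tasks done without one, which is only a rough proxy because the two groups may differ in difficulty. The `Task` record and the sample data are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Task:
    """Hypothetical task record for template tracking."""
    eligible: bool       # could a registered template have been used?
    used_template: bool
    minutes: int         # time spent on the task


def reuse_rate(tasks):
    """Percent of template-eligible tasks that actually used a template."""
    eligible = [t for t in tasks if t.eligible]
    if not eligible:
        return 0.0
    return 100.0 * sum(1 for t in eligible if t.used_template) / len(eligible)


def minutes_saved_per_templated_task(tasks):
    """Average minutes on untemplated eligible tasks minus average on templated ones."""
    templated = [t.minutes for t in tasks if t.eligible and t.used_template]
    baseline = [t.minutes for t in tasks if t.eligible and not t.used_template]
    if not templated or not baseline:
        return None  # not enough data for a comparison
    return mean(baseline) - mean(templated)


# Illustrative data only.
tasks = [
    Task(eligible=True, used_template=True, minutes=30),
    Task(eligible=True, used_template=True, minutes=40),
    Task(eligible=True, used_template=False, minutes=60),
    Task(eligible=True, used_template=False, minutes=50),
    Task(eligible=False, used_template=False, minutes=90),
]

print("Template reuse rate (%):", reuse_rate(tasks))
print("Minutes saved per templated task:", minutes_saved_per_templated_task(tasks))
```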

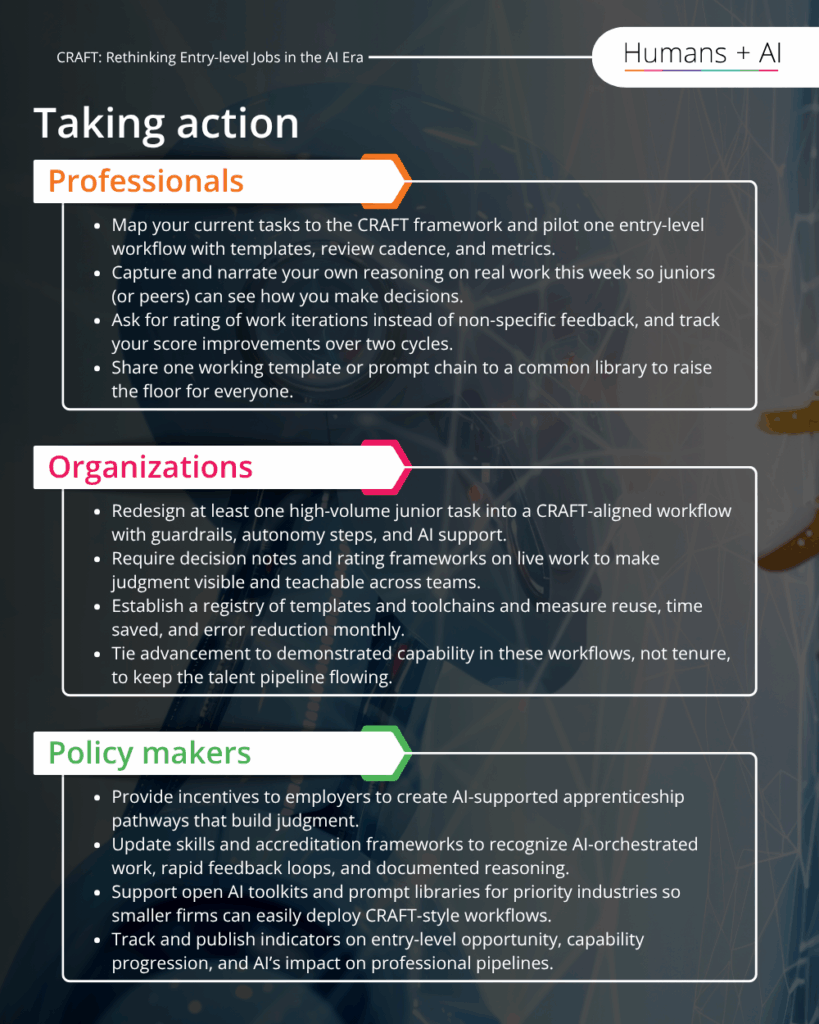

Taking action


Professionals

- Map your current tasks to the CRAFT framework and pilot one entry-level workflow with templates, review cadence, and metrics.

- Capture and narrate your own reasoning on real work this week so juniors (or peers) can see how you make decisions.

- Ask for ratings on each iteration of your work instead of non-specific feedback, and track your score improvements over two cycles.

- Contribute one working template or prompt chain to a shared library to raise the floor for everyone.

Organizations

- Redesign at least one high-volume junior task into a CRAFT-aligned workflow with guardrails, autonomy steps, and AI support.

- Require decision notes and rating frameworks on live work to make judgment visible and teachable across teams.

- Establish a registry of templates and toolchains and measure reuse, time saved, and error reduction monthly.

- Tie advancement to demonstrated capability in these workflows, not tenure, to keep the talent pipeline flowing.

Policy makers

- Provide incentives to employers to create AI-supported apprenticeship pathways that build judgment.

- Update skills and accreditation frameworks to recognize AI-orchestrated work, rapid feedback loops, and documented reasoning.

- Support open AI toolkits and prompt libraries for priority industries so smaller firms can easily deploy CRAFT-style workflows.

- Track and publish indicators on entry-level opportunity, capability progression, and AI’s impact on professional pipelines.